What Are Approximation Algorithms?

The NP-Hard Problem

An optimization problem asks us to find the best solution from a (usually enormous) set of candidates — the shortest tour, the smallest cover, the most valuable packing. For problems in P, exact optimal solutions can be found in polynomial time. For NP-hard optimization problems, no polynomial-time exact algorithm is known, and widely believed complexity theory suggests none exists.

Faced with an NP-hard optimization problem, three responses are available: restrict inputs to tractable special cases, accept exponential runtime for small instances, or give up on optimality and settle for a solution that is provably “close enough.” The third path is the domain of approximation algorithms: efficient procedures that sacrifice exactness in exchange for a guaranteed bound on how far from optimal their output can be.

Key property: Unlike heuristics, approximation algorithms come with a proof. The guarantee holds for every instance, not just on average or in practice. This makes them analytically rigorous tools, not guesses.

Approximation Ratio — The Performance Guarantee

Formal Definition

An algorithm A is a ρ-approximation algorithm for an optimisation problem if, for every input instance I, the cost C of A's output satisfies:

C ≤ ρ ⋅ OPT (minimisation) or C ≥ OPT / ρ (maximisation)

The ratio ρ ≥ 1 is called the approximation ratio or performance guarantee. It is a worst-case bound that holds for every instance, regardless of size or structure. A smaller ρ is better. When ρ = 1 + ε for any ε > 0 chosen by the user (at the cost of a slower runtime), the algorithm family is called a Polynomial-Time Approximation Scheme (PTAS).

A critical observation: All NP-complete decision problems are equivalent under polynomial reductions, so NP-completeness theory gives us no way to distinguish them in difficulty. Approximation theory reveals fine-grained structure: vertex cover and maximum clique are both NP-hard, yet vertex cover admits a constant-factor approximation while maximum clique is provably inapproximable within any polynomial factor.

Case Study: Vertex Cover — A 2-Approximation

The Matching-Based 2-Approximation

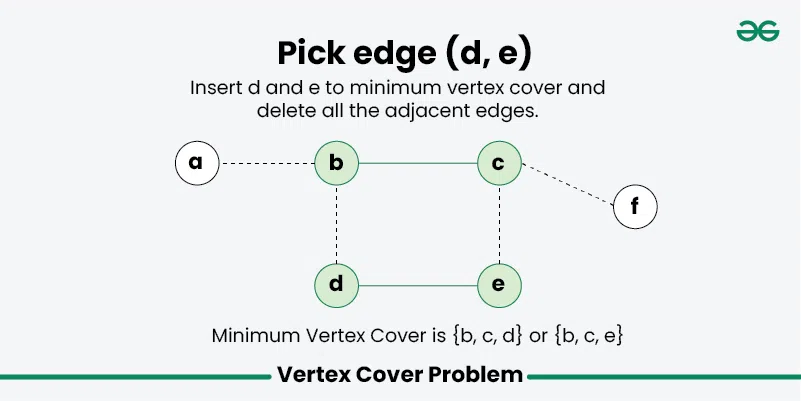

Problem: Given an undirected graph G = (V, E), find the smallest set C ⊆ V such that every edge has at least one endpoint in C (a vertex cover). This is NP-hard.

Algorithm: Repeatedly pick any uncovered edge (u, v), add both u and v to the cover, and remove all edges incident to either. Return C when no edges remain. Runtime: O(V + E).

Why it gives a 2-approximation: The edges the algorithm picks form a maximal matching M — no two selected edges share a vertex. Any vertex cover must include at least one endpoint of every edge in M, so OPT ≥ |M|. The algorithm includes both endpoints of each edge in M, giving |C| = 2|M| ≤ 2 ⋅ OPT. The ratio of 2 is the best known for vertex cover; improving it would be a major result.

Case Study: Metric TSP — The Christofides 1.5-Approximation

Why TSP Approximability Depends on the Metric

General TSP: For arbitrary edge weights, no polynomial-time ρ-approximation exists for any constant ρ unless P = NP. The proof uses a reduction from Hamiltonian cycle: if an approximation existed, it could decide Hamiltonian cycle in polynomial time, which is NP-complete.

Metric TSP (where edge weights satisfy the triangle inequality): Christofides' algorithm (1976) achieves a 1.5-approximation in polynomial time. It builds a minimum spanning tree (a lower bound on OPT), finds a minimum-weight perfect matching on the odd-degree vertices, combines them into an Eulerian circuit, and shortcircuits repeated vertices. The 1.5 ratio follows from the fact that the matching costs at most OPT/2 and the MST costs at most OPT.

Recent progress: In 2021, Karlin, Klein, and Oveis Gharan improved the ratio to 1.5 − 10−36 — the first improvement in nearly 50 years. The current best inapproximability lower bound is 123/122 ≈ 1.008, leaving a gap that remains open.

Approximation Schemes: Getting Arbitrarily Close

The Knapsack PTAS

The 0/1 Knapsack problem — given items with weights and values, fill a capacity-W knapsack to maximise value — is NP-hard. However, it admits a Polynomial-Time Approximation Scheme: for any ε > 0 chosen by the user, there is an algorithm that runs in time O(n3/ε) and returns a solution with value at least (1 − ε) ⋅ OPT.

The technique — value rounding: Round all item values down to the nearest multiple of K = ε ⋅ vmax / n, where vmax is the maximum item value and n is the number of items. This reduces the range of distinct values to O(n/ε), making dynamic programming tractable in polynomial time. The rounding introduces at most ε relative error in the optimum.

The trade-off: Smaller ε gives a better approximation but longer runtime. This trade-off is inherent: if runtime polynomial in 1/ε were not needed, the problem would be solvable exactly in polynomial time. The existence of a PTAS does not contradict NP-hardness — it means the problem becomes tractable when we accept a small, user-chosen error.

Key Approximation Results at a Glance

Design Strategies

Greedy Algorithms

Make locally optimal choices at each step. Often yield logarithmic approximations for covering problems (Set Cover: greedily pick the set covering the most uncovered elements). Simple and fast, but the ratio is determined by the problem's structure.

LP Relaxation + Rounding

Formulate the problem as an integer linear program, solve the LP relaxation in polynomial time, then round the fractional solution to an integer one. Vertex Cover's LP gives a 2-approximation by rounding every variable ≥ 0.5 up to 1. The integrality gap of the LP bounds the achievable ratio.

Primal–Dual Schema

Simultaneously construct a feasible primal solution and a dual solution that witnesses a lower bound on OPT. The approximation ratio follows from comparing the two. Used in Shortest Path, Steiner Tree, and generalised matching problems.

Dynamic Programming + Rounding

Round or scale problem parameters to reduce the state space of DP to polynomial size, introducing controlled error. The basis of PTAS designs for Knapsack, Subset Sum, and Scheduling. The approximation ratio trades off with runtime via the error parameter ε.

Resources & Further Reading